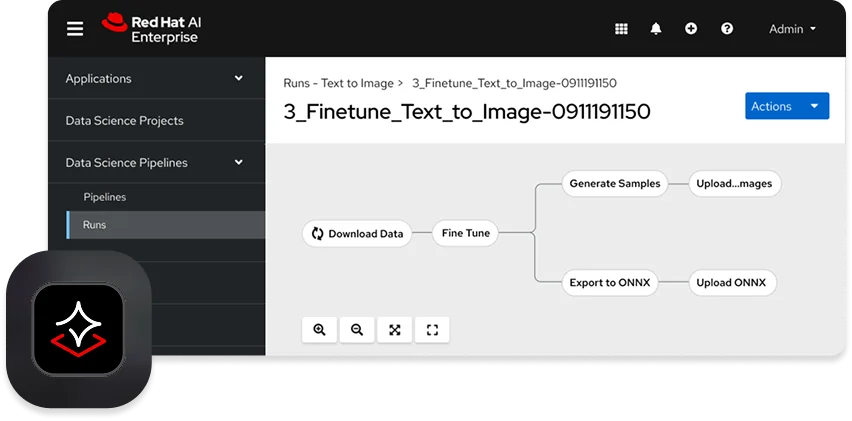

Red Hat has launched Red Hat AI Enterprise, a new platform for deploying and managing AI in production. The launch comes as many organizations find their AI pilot projects stalled for lack of cohesive tools and infrastructure. The platform aims to ease AI integration by providing standardized environments for model customization, tuning, and deployment across different hardware configurations.

Key features of Red Hat AI Enterprise include optimized runtimes for efficient model serving and a standardized API layer provided through Llama Stack. It also supports the Model Context Protocol (MCP) for connecting models to external systems. For operational safety, the platform provides tools for drift detection, bias monitoring, and model explainability, and it includes the RAGAS evaluation framework.

Red Hat also announced Red Hat AI 3.3, an update to its existing platform. The release introduces compressed versions of models such as Mistral-Large-3 and expands hardware compatibility with support for AMD MI325X accelerators. It also includes a technology preview of Models-as-a-Service (MaaS), which lets IT teams offer self-service access to hosted models.