Meta plans to expand its artificial intelligence capabilities with four new generations of its MTIA chip: the MTIA 300, MTIA 400, MTIA 450, and MTIA 500, all developed in collaboration with Broadcom. The chips are scheduled for deployment over the next two years, a pace that reflects a strategic shift toward improving AI performance through rapid development cycles.

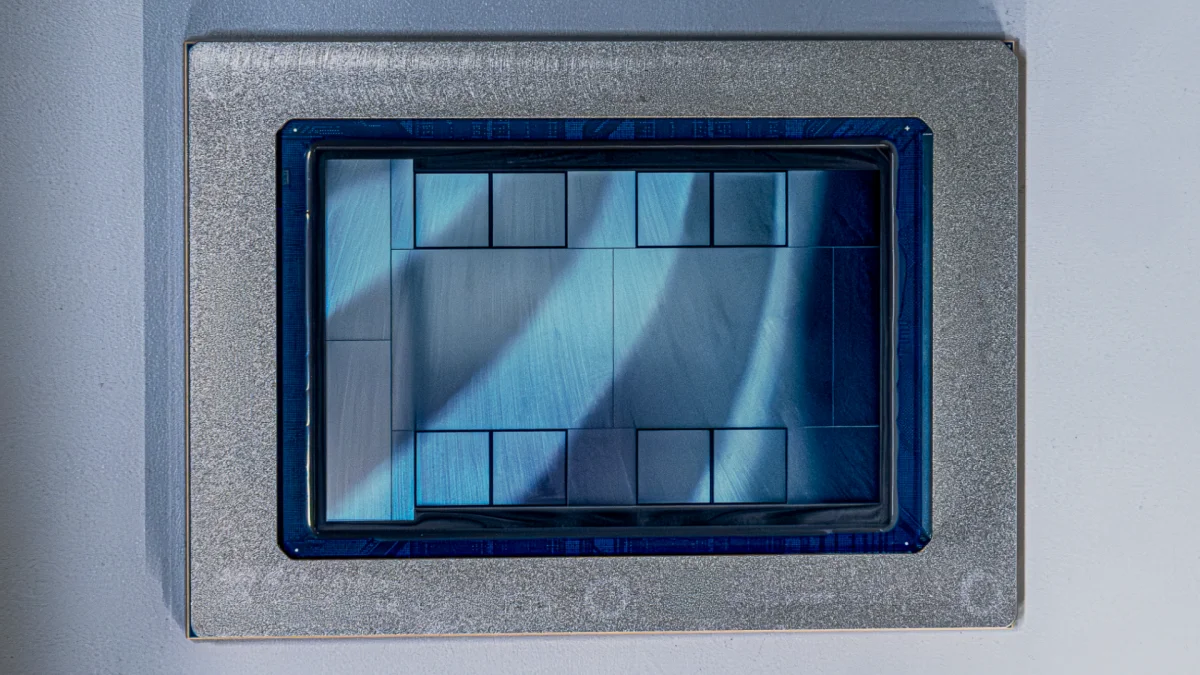

The MTIA 300 is already in production and targets ranking and recommendation workloads, while the MTIA 400 is going through lab testing ahead of deployment in data centers. The MTIA 450 and MTIA 500, slated for mass deployment in early and late 2027 respectively, are tailored for AI inference and are expected to deliver significant performance gains. The MTIA 500, for example, is expected to reach up to 30 PFLOPS of peak performance along with a substantial increase in HBM bandwidth.

Meta says the MTIA 450 doubles the HBM bandwidth of the MTIA 400, improving how it compares with leading commercial products such as Nvidia’s H100 and H200. Other innovations include hardware acceleration for specific tasks and custom data types optimized for inference, which Meta presents as addressing current limitations of mainstream GPUs.