Meta Platforms is introducing four custom-designed chips aimed at optimizing artificial intelligence (AI) workloads, a major initiative to bolster its AI capabilities. The move reflects the company’s strategy to strengthen its in-house hardware and reduce reliance on external chip suppliers such as Nvidia and AMD.

The new chips are tailored to complex AI tasks that require substantial computational power. The first, the AI Training Chip, focuses on accelerating the training of large neural networks, while the second, the AI Inference Chip, is designed to make real-time predictions efficiently. The third, the Edge AI Chip, is intended to run AI features directly on user devices, enhancing augmented and virtual reality experiences.

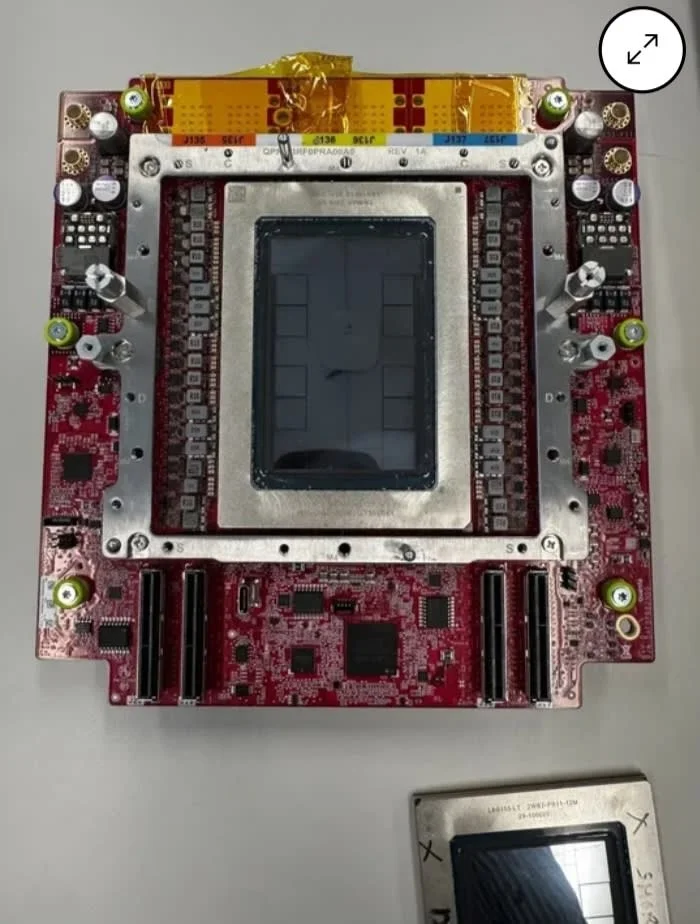

Lastly, the Data-Center Accelerator Chip is intended to further boost Meta’s AI infrastructure within its data centers. Although detailed specifications for these chips have not been publicly released, their development is expected to significantly enhance the AI tools behind Meta’s platforms, including Facebook, Instagram, and WhatsApp.