As demand for AI computing power surges, industry analysts predict that global data center capacity will grow at double-digit rates in the coming years. AI-related traffic is expected to grow in step, with significant consequences for infrastructure needs.

Organizations are increasingly prioritizing upgrades to their network architectures to support AI workloads, hybrid cloud strategies, and distributed computing environments. To meet these requirements, the industry is accelerating the construction of hyperscale data centers.

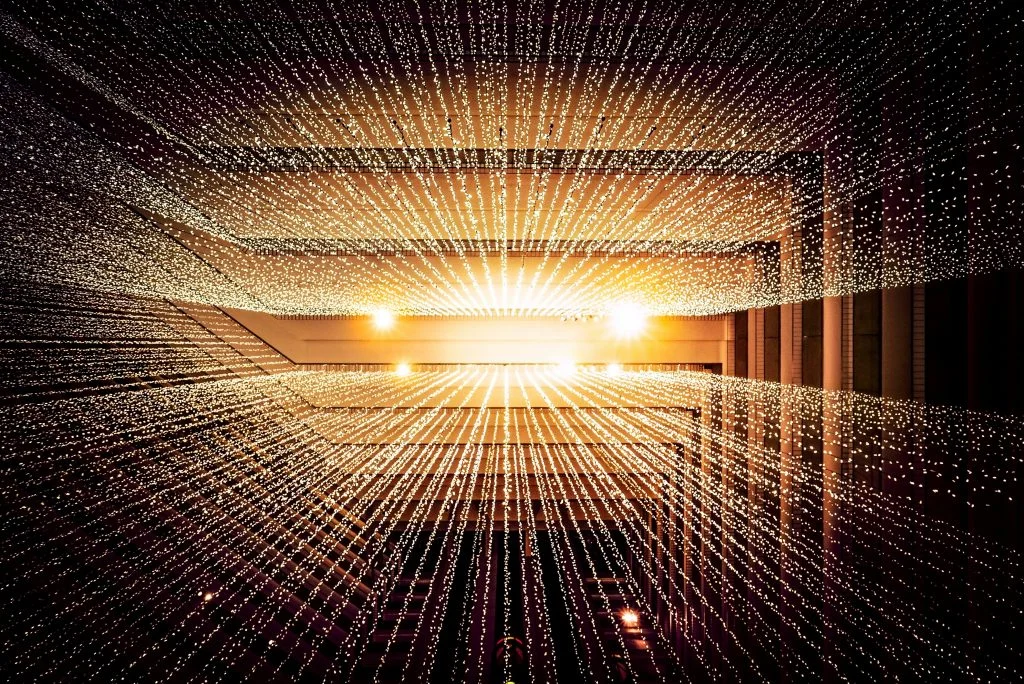

However, a crucial yet often overlooked factor is the physical infrastructure that underpins AI performance. High-capacity fiber optic networks, advanced cooling technologies, and carrier-grade data centers are essential for effective AI operation during both the training and inference phases.

Modern AI models require extensive data flows, often measured in petabytes, between storage arrays and GPU clusters. The need for robust infrastructure is intensified by the growing enterprise migration to cloud services and the corresponding rise in edge computing, which is adopted to reduce latency.
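
To give a rough sense of what petabyte-scale flows mean for link capacity, the following is a minimal back-of-the-envelope sketch. The link rates (100, 400, and 800 Gb/s) and the `efficiency` parameter are illustrative assumptions, not figures from this article, and the function name `transfer_time_hours` is hypothetical.

```python
PETABYTE = 10**15  # decimal petabyte, in bytes


def transfer_time_hours(data_bytes: float, link_gbps: float, efficiency: float = 1.0) -> float:
    """Hours needed to move data_bytes over a single link.

    link_gbps is the nominal line rate in gigabits per second.
    efficiency is the assumed fraction of the line rate that is usable
    after protocol overhead (1.0 means the ideal, overhead-free case).
    """
    if link_gbps <= 0:
        raise ValueError("link_gbps must be positive")
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    total_bits = data_bytes * 8
    usable_bits_per_second = link_gbps * 1e9 * efficiency
    return total_bits / usable_bits_per_second / 3600


if __name__ == "__main__":
    # Illustrative line rates only; real deployments vary.
    for rate in (100, 400, 800):
        hours = transfer_time_hours(PETABYTE, rate)
        print(f"1 PB over {rate} Gb/s: {hours:.1f} h")
```

Under these idealized assumptions, moving one petabyte takes about 22.2 hours at 100 Gb/s, 5.6 hours at 400 Gb/s, and 2.8 hours at 800 Gb/s on a single link, which illustrates why high-capacity fiber between storage and GPU clusters matters.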